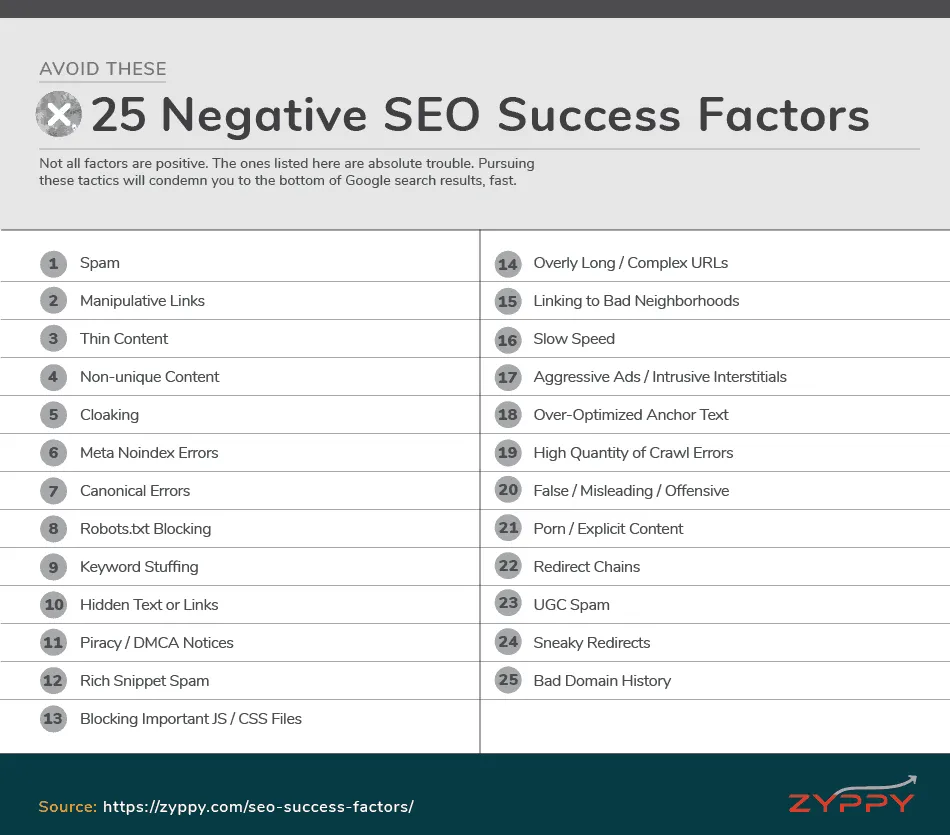

Not all success factors are positive. The ones listed below possess the power to damage your visibility. Proceed with caution.

Contents:

- Spam

- Manipulative Links

- Thin Content

- Non-unique Content

- Cloaking

- Meta Noindex Errors

- Canonical Errors

- Robots.txt Blocking

- Keyword Stuffing

- Hidden Text or Links

- Piracy / DMCA Notices

- Rich Snippet Spam

- Blocking Important JS / CSS Files

- Overly Long / Complex URLs

- Linking to Bad Neighborhoods

- Slow Speed

- Aggressive Ads / Intrusive Interstitials

- Over-Optimized Anchor Text

- High Quantity of Crawl Errors

- False / Misleading / Offensive

- Porn / Explicit Content

- Redirect Chains

- UGC Spam

- Sneaky Redirects

- Bad Domain History

1. Spam

The whole point of Google is to deliver the opposite of spam, so producing spam isn’t going to help you rank.

Spam takes many different forms. It may include, but isn’t limited to:

- Spun / low quality content

- Doorway pages

- Low quality auto-generated content

- Scraped content

- Malware

If you’ve made it this far through SEO Success Factors, you know this is not what you want to produce.

2. Manipulative Links

Google hates unnatural links.

To be fair, unnatural links have been less of a big deal ever since Google released Penguin 4.0, which is very granular and often more likely to ignore bad links than to penalize you for them.

That said, naughty linking practices remain a major cause of lower rankings, either through manual penalties or algorithmic actions.

Learn More

Learn to avoid link schemes and follow the rules of link building.

3. Thin Content

Content that adds little value, uniqueness, or substance qualifies as thin content.

Especially damning to Google are thin affiliate sites, which exist solely to funnel folks through affiliate links while adding little extra value.

4. Non-unique Content

While Google doesn’t penalize duplicate content, content that is non-unique can get filtered from search results.

Aside from following the advice earlier in this guide on duplicate content, it’s best practice to have some unique content on each page, anywhere from 2-3 sentences to a few hundred words, for it to have a chance of ranking.

5. Cloaking

Cloaking is generally defined as the practice of showing certain content to users, while showing different content to search engines – typically for sneaky reasons.

Don’t do this.

Sometimes cloaking is fine if done for the right reason, but these are usually edge cases.

6. Meta Noindex Errors

The meta noindex directive is a powerful tool that, when used correctly, helps with crawling, indexing, and duplicate content issues.

But noindex can also be abused and cause unnecessary errors. True to its purpose, using a meta noindex on a page causes Google to drop it from its index, and it won’t rank.

In fact, meta noindex errors are one of the most frequent findings during SEO audits.
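During an audit, a quick way to catch a stray noindex is to scan each page's HTML for a robots meta tag. The snippet below is a minimal sketch using only Python's standard library. The names `RobotsMetaParser` and `has_noindex` are made up for illustration, and it only looks at meta tags, so it assumes the directive isn't being sent through an `X-Robots-Tag` HTTP header instead.

```python
from html.parser import HTMLParser


class RobotsMetaParser(HTMLParser):
    """Collect directives from <meta name="robots"> / <meta name="googlebot"> tags."""

    def __init__(self):
        super().__init__()
        self.directives = set()

    def handle_starttag(self, tag, attrs):
        if tag != "meta":
            return
        attr = {name: (value or "") for name, value in attrs}
        if attr.get("name", "").lower() in ("robots", "googlebot"):
            # content looks like "noindex, follow": split it into tokens
            for token in attr.get("content", "").split(","):
                self.directives.add(token.strip().lower())


def has_noindex(html: str) -> bool:
    """Return True if the page asks search engines not to index it."""
    parser = RobotsMetaParser()
    parser.feed(html)
    # "none" is shorthand for "noindex, nofollow"
    return "noindex" in parser.directives or "none" in parser.directives


page = '<head><meta name="robots" content="noindex, follow"></head>'
print(has_noindex(page))  # True
```

Run something like this across your important URLs and any `True` on a page you expect to rank deserves a closer look.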

7. Canonical Errors

Another common error. If URL 1 has a canonical tag pointing to URL 2, then URL 1 isn’t going to be indexed or ranked (as long as Google respects the canonical).

Additional canonical errors include pointing a canonical to a page marked “noindex”, which can cause both pages to be dropped from Google’s index. Ouch!
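To spot pages whose canonical points somewhere unexpected, you can pull the canonical link out of the HTML and compare it with the page's own URL. This is a rough sketch, not a full audit: `CanonicalParser` and `canonical_status` are hypothetical names, and the comparison only ignores a trailing slash, which is an assumption about how you treat equivalent URLs.

```python
from html.parser import HTMLParser
from urllib.parse import urljoin


class CanonicalParser(HTMLParser):
    """Collect the href of every <link rel="canonical"> on a page."""

    def __init__(self):
        super().__init__()
        self.canonicals = []

    def handle_starttag(self, tag, attrs):
        if tag != "link":
            return
        attr = {name: (value or "") for name, value in attrs}
        if "canonical" in attr.get("rel", "").lower().split():
            self.canonicals.append(attr.get("href", "").strip())


def canonical_status(page_url: str, html: str) -> str:
    """Classify a page as 'missing', 'conflicting', 'self', or 'points-elsewhere'."""
    parser = CanonicalParser()
    parser.feed(html)
    # Resolve relative hrefs against the page URL before comparing
    targets = {urljoin(page_url, href) for href in parser.canonicals}
    if not targets:
        return "missing"
    if len(targets) > 1:
        return "conflicting"
    target = targets.pop()
    if target.rstrip("/") == page_url.rstrip("/"):
        return "self"
    return "points-elsewhere"


html = '<link rel="canonical" href="https://example.com/boots">'
print(canonical_status("https://example.com/shoes", html))  # points-elsewhere
```

A "points-elsewhere" result isn't automatically wrong, but it is exactly the URL 1 to URL 2 situation described above, so check that it's intentional.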

8. Robots.txt Blocking

Pages blocked by robots.txt—accidental or otherwise—likely won’t rank well in Google’s search results.

An important distinction is that while robots.txt prevents crawling, it does not stop a page from appearing in search results. The best way to control this is through the noindex directive.
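If you want to test whether a URL is blocked from crawling, Python ships with a simple robots.txt parser. The sketch below feeds it a made-up robots.txt and asks about two example URLs. The helper name `is_crawlable` and the sample rules are assumptions for illustration, and Python's parser may not match Google's own matching rules in every detail, so treat the answer as a first pass.

```python
from urllib.robotparser import RobotFileParser

# Sample rules for illustration only
ROBOTS_TXT = """\
User-agent: *
Disallow: /private/
"""


def is_crawlable(robots_txt: str, url: str, user_agent: str = "Googlebot") -> bool:
    """Return True if the given robots.txt allows user_agent to crawl url."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return parser.can_fetch(user_agent, url)


print(is_crawlable(ROBOTS_TXT, "https://example.com/private/report.html"))  # False
print(is_crawlable(ROBOTS_TXT, "https://example.com/blog/"))  # True
```

Remember that a `False` here only means "not crawled." As noted above, keeping a page out of the results is a job for noindex, and Google has to be able to crawl the page to see that directive.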

9. Keyword Stuffing

Keyword stuffing is the art of stuffing keywords where keywords shouldn’t be stuffed. Enough?
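There's no official "too many keywords" number, but a rough density check can flag copy that deserves a second read. The function below is only a sketch: `keyword_density` is a hypothetical helper, it handles single-word keywords, and whatever threshold you compare the result against is your own judgment call.

```python
import re


def keyword_density(text: str, keyword: str) -> float:
    """Return the share of words in text that exactly match keyword (case-insensitive)."""
    words = re.findall(r"[a-z0-9']+", text.lower())
    if not words:
        return 0.0
    hits = sum(1 for word in words if word == keyword.lower())
    return hits / len(words)


copy = "Cheap shoes! Buy cheap shoes at our cheap shoes store."
print(f"{keyword_density(copy, 'cheap'):.0%}")  # 30%
```

When one word makes up nearly a third of the copy, as it does in this example, a human reader will notice, and so might Google.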

10. Hidden Text or Links

Hiding text and links is a form of cloaking, because it shows something in the source code to search engines that users typically can’t see.

While this was much more common in the early days of SEO, it’s still around today—sometimes accidentally! Hiding text, and especially links, often means a fast path to the Google penalty box.

11. Piracy / DMCA Notices

Whether you run a pirate site or not, the number of valid DMCA copyright removal notices your site receives can lower your search rankings.

A single notice or two likely won’t hurt much, but a large number of such removal requests will likely hurt.

12. Rich Snippet Spam

Rich snippets are awesome when you want to make your search results stand out. Which is probably the reason so many people try to earn them with false information such as fake reviews and falsified event markup.

But spammy structured data can get your site penalized. Google even has a form where your competitors can report you.

13. Blocking Important JS / CSS Files

Allowing Google to crawl your JavaScript and CSS files is part of crawling and indexing, but one that many people overlook. When these files are blocked by robots.txt, Google has trouble rendering the page like a browser, and your rankings may suffer.

You can run a quick check of blocked resources using Google’s Fetch and Render tool (since replaced by the URL Inspection tool in Search Console), and address any problems.
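For a rough offline check, you can list the scripts and stylesheets a page references and test each one against your robots.txt. This is a sketch under a few assumptions: `AssetParser` and `blocked_assets` are hypothetical names, every asset is assumed to live on the same host as the robots.txt you pass in, and Python's robots.txt parser may not match Google's in every detail.

```python
from html.parser import HTMLParser
from urllib.parse import urljoin
from urllib.robotparser import RobotFileParser


class AssetParser(HTMLParser):
    """Collect script and stylesheet URLs referenced by a page."""

    def __init__(self):
        super().__init__()
        self.assets = []

    def handle_starttag(self, tag, attrs):
        attr = {name: (value or "") for name, value in attrs}
        if tag == "script" and attr.get("src"):
            self.assets.append(attr["src"])
        elif tag == "link" and "stylesheet" in attr.get("rel", "").lower().split():
            if attr.get("href"):
                self.assets.append(attr["href"])


def blocked_assets(page_url, html, robots_txt, user_agent="Googlebot"):
    """Return the absolute URLs of JS/CSS files that robots.txt disallows."""
    finder = AssetParser()
    finder.feed(html)
    robots = RobotFileParser()
    robots.parse(robots_txt.splitlines())
    absolute = [urljoin(page_url, src) for src in finder.assets]
    return [url for url in absolute if not robots.can_fetch(user_agent, url)]


html = (
    '<link rel="stylesheet" href="/static/css/site.css">'
    '<script src="/static/js/app.js"></script>'
)
robots_txt = "User-agent: *\nDisallow: /static/js/\n"
print(blocked_assets("https://example.com/", html, robots_txt))
# ['https://example.com/static/js/app.js']
```

Anything this returns is a file Google can't fetch when it tries to render the page.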

14. Overly Long / Complex URLs

In almost all SEO correlation studies, the total length of the URL is correlated with lower rankings. The same goes for the number of digits and special characters in the URL, e.g., https://example.com/887600o!jshfj#jklsing0098019-874

The converse is also true: shorter, cleaner URLs tend to rank slightly better.

Correlation is not causation, but this could be caused by:

- Deep folder structures, often far removed from high authority pages

- Superfluous parameters

- People being less likely to copy and share long, complex URLs

Whatever the reason, it often pays to keep your URLs clean and tidy.
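If you want to find your messiest URLs, a few simple measurements go a long way. The sketch below counts total length, folder depth, query parameters, and digits. The function name `url_complexity` and the choice of metrics are assumptions for illustration, not a scoring system Google uses.

```python
from urllib.parse import parse_qsl, urlsplit


def url_complexity(url: str) -> dict:
    """Return a few rough complexity measurements for a URL."""
    parts = urlsplit(url)
    segments = [segment for segment in parts.path.split("/") if segment]
    return {
        "length": len(url),  # total characters
        "depth": len(segments),  # folders plus the final page
        "params": len(parse_qsl(parts.query, keep_blank_values=True)),
        "digits": sum(ch.isdigit() for ch in parts.path + parts.query),
    }


print(url_complexity("https://example.com/shop/2019/item?id=42&ref=abc"))
# {'length': 48, 'depth': 3, 'params': 2, 'digits': 6}
```

Sort your URL list by any of these numbers and the candidates for cleanup tend to float to the top.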

15. Linking to Bad Neighborhoods

Just like Google’s system rewards sites that link out to high-quality sources, the system also “trusts sites less when they link to spammy sites or bad neighborhoods” (source).

A bad neighborhood is one filled with spam sites, or sites that have been penalized. Gambling, shady pharmaceutical sites, and porn are often targets. Linking to these sites aggressively can put a serious dent in your search traffic.

16. Slow Speed

We’ve covered the multiple outsized effects of making your site fast, but the opposite is also true. Slow sites can be degraded in search results.

When Google first included speed in their algorithm, it was only supposed to impact the slowest of the slow, the bottom 1% of all pages. Since then, SEOs have observed the speed effect as a smooth curve along all sites.

Don’t be pokey.

17. Aggressive Ads / Intrusive Interstitials

Google is an ad company, and they understand ads fund the web. That said, there are two different ways that overly aggressive ads can hurt your rankings:

- Google’s Top Heavy algorithm punishes sites with too many ads above the fold, or in the primary content area.

- The Intrusive Interstitial update punishes mobile sites with aggressive popups and interstitials.

Feel free to use ads, but don’t be pushy about it.

18. Over-Optimized Anchor Text

Anchor text over-optimization is too much of a good thing.

Yes, it helps to have keywords in the anchor text of the links pointing to your site. Even exact match anchor text.

But SEOs long ago observed that when your anchor text becomes “too” optimized, rankings begin to fall. It’s as if Google can tell that your link profile doesn’t look natural, so they demote you in search results.

The best advice is to have a wide variety of anchor text in the links pointing at your site, and even to avoid dictating anchor text when asking for links.
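One way to eyeball how varied your anchor text is: export the anchors of your inbound links and see what share each one takes. The snippet below is a minimal sketch. `anchor_text_shares` is a hypothetical helper, the sample anchors are made up, and there's no published cutoff for "too optimized," so the numbers are only a conversation starter.

```python
from collections import Counter


def anchor_text_shares(anchors):
    """Return each anchor text's share of all links, most common first."""
    normalized = [anchor.strip().lower() for anchor in anchors if anchor.strip()]
    counts = Counter(normalized)
    total = len(normalized)
    return {text: count / total for text, count in counts.most_common()}


# Made-up sample data
anchors = ["Best Running Shoes", "best running shoes", "Example Store", "click here"]
for text, share in anchor_text_shares(anchors).items():
    print(f"{share:.0%}  {text}")
```

If a single exact-match phrase dominates the list, that's the unnatural-looking profile described above.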

19. High Quantity of Crawl Errors

Crawling errors are entirely normal, and most won’t hurt you. In fact, Google has specifically stated that 404s don’t hurt your rankings.

That said, a large number of crawl errors can be a sign of trouble. In the case of 404s, when they are a result of broken links—either internal or external—this can cause problems in the flow of link equity, create frustrating user experiences, and depress rankings from their real potential.

Not every error needs to be addressed, but a rash of bad crawl errors can spell trouble.

20. False / Misleading / Offensive

To crack down on Fake News, Google recently updated its algorithm to demote content that promotes “misleading information, unexpected offensive results, hoaxes and unsupported conspiracy theories” (source).

Google uses its army of Search Quality Raters to flag false and misleading information, and uses that data to train its machine learning algorithms to detect fake and misleading offensive content.

God save the Queen.

21. Porn / Explicit Content

This one’s pretty obvious, but if Google determines your site contains porn or explicit adult content, it will be mostly hidden in search results except for a very narrow range of search queries, and completely hidden with Safe Search turned on.

22. Redirect Chains

301 redirects are awesome! They get people and search robots where they need to go, and they even pass PageRank.

Seventeen 301 redirects chained together, with a 302 at the end, is not awesome.

In general, Google is known to follow only five redirects before giving up (your mileage may vary). Long redirect chains are also prone to break, leak PageRank, and generally depress rankings. For best results, shorten redirect chains to the fewest hops possible.
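To find chains worth shortening, you can walk your redirect map and count the hops. The sketch below works on a plain dictionary instead of live HTTP requests. The names `redirect_chain` and `too_many_hops` and the sample map are hypothetical, and the default limit of five simply mirrors the figure mentioned above; it isn't an official number.

```python
def redirect_chain(start, redirects):
    """Follow redirects from start and return every URL visited, in order.

    redirects maps a URL to a (status_code, target_url) pair.
    """
    chain = [start]
    while chain[-1] in redirects:
        _status, target = redirects[chain[-1]]
        looped = target in chain
        chain.append(target)
        if looped:  # stop instead of spinning forever on a redirect loop
            break
    return chain


def too_many_hops(chain, max_hops=5):
    """Return True if the chain has more hops than max_hops."""
    return len(chain) - 1 > max_hops


# Made-up redirect map for illustration
redirects = {
    "/old": (301, "/newer"),
    "/newer": (301, "/newest"),
    "/newest": (302, "/final"),
}
chain = redirect_chain("/old", redirects)
print(" -> ".join(chain))  # /old -> /newer -> /newest -> /final
print(too_many_hops(chain))  # False
```

Even when a chain stays under the limit, pointing `/old` straight at `/final` removes two hops and one place for things to break.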

23. UGC Spam

It’s terrific when you produce great content, but not so much if you let users submit spam to your website.

User-generated spam can include spam comments, forum postings, and accounts on free hosts. The general rule is, if you host it on your site, you’re responsible for it. If you let your users run astray, it can hurt your rankings.

24. Sneaky Redirects

Sneaky redirection is the practice of sending users to a page they didn’t expect, typically spam.

Google doesn’t like this, so don’t do it.

25. Bad Domain History

Even if your site is squeaky clean (it is, right?) you may still suffer in search results if you have a long history of spam or penalties. Once everything is cleaned up, it can take time for Google to crawl your content and reassess your site value.

SEOs often report that this process can take many months or more.

If your site has a bad domain history, it’s typically best to do a link audit and disavow, resolve any penalties, create new content, submit sitemaps, fix technical issues, and build new links.

If any of these negative success factors impact you and you do the hard work of cleaning up, your rankings will return, hopefully sooner rather than later.