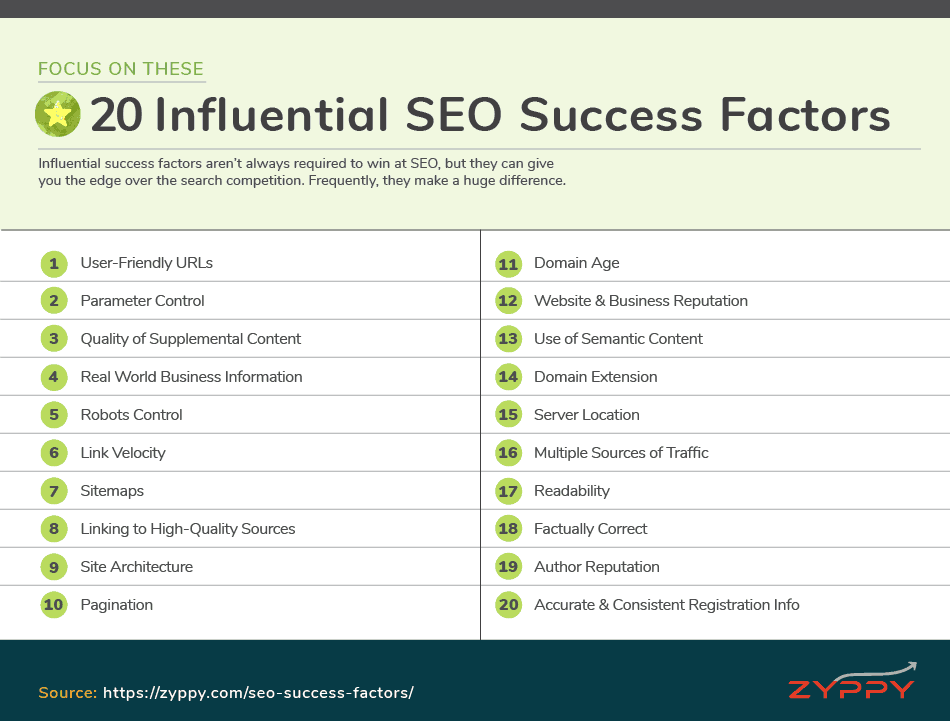

Influential success factors aren’t always required for successful SEO, but they can give you the edge over the search competition. Frequently, they make a huge difference.

Contents:

- User-Friendly URLs

- Parameter Control

- Quality of Supplemental Content

- Real World Business Information

- Robots Control

- Link Velocity

- Sitemaps

- Linking Out to High-Quality Sources

- Site Architecture

- Pagination

- Domain Age

- Website & Business Reputation

- Use of Semantic Content

- Domain Extension

- Server Location

- Multiple Sources of Traffic

- Readability

- Factually Correct

- Author Reputation

- Accurate & Consistent Registration Info

1. User-Friendly URLs

Good URLs can make a big difference in all areas of SEO: crawling, ranking, CTR, and sharing. A few rules for URLs that SEOs have found true through testing and performance:

- Shorter URLs > Longer URLs

- Keywords in URLs help, but not too many

- 1-2 folder levels, tops

- Generally avoid numbers and special characters, and keep parameters to a minimum

Example of a good URL:

- https://example.com/seo-success-factors/

Example of a mostly terrible URL:

- https://example.com/foo/5bbf54687s-641/i/i/?ref_=bb8?usid_=44null
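The rules above can be turned into a rough automated check. The following is a minimal sketch, not a definitive audit: the function name `url_warnings` and the thresholds (75 characters, 2 folder levels) are assumptions chosen for illustration, not values published by any search engine.

```python
from urllib.parse import urlsplit, parse_qsl


def url_warnings(url, max_length=75, max_depth=2):
    """Return warnings for a URL. The thresholds are illustrative assumptions."""
    parts = urlsplit(url)
    # Non-empty path segments approximate the number of folder levels.
    segments = [segment for segment in parts.path.split("/") if segment]
    params = parse_qsl(parts.query, keep_blank_values=True)

    warnings = []
    if len(url) > max_length:
        warnings.append("long")
    if len(segments) > max_depth:
        warnings.append("deep")
    if params:
        warnings.append("parameters")
    if any(ch.isdigit() for ch in parts.path):
        warnings.append("digits")
    return warnings


print(url_warnings("https://example.com/seo-success-factors/"))
print(url_warnings("https://example.com/foo/5bbf54687s-641/i/i/?ref_=bb8?usid_=44null"))
```

A check like this only flags candidates for review. A flagged URL is not necessarily a bad one.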

2. Parameter Control

Too many parameters can cause a lot of work for search engines, and also create a ton of duplicate content issues. The number of new URLs created by parameters can add up fast.

Consider this URL:

- http://www.example.com/?price-category=couch-furnature&sort-by=manufactuer&sort-order=asc&availability=null

Google provides a specialized tool within Search Console to deal with URL parameters. While the URL Parameter Tool is very powerful, configuring it correctly can be confusing for anyone other than expert users. For many marketers, it’s simply easier and safer to control parameters using rel=canonical.
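To see how quickly parameters multiply, and what a canonical URL for the example above might look like, here is a minimal sketch. It assumes that `sort-by`, `sort-order`, and `availability` do not change the core content of the page. That choice, and the names `NON_CANONICAL_PARAMS`, `canonical_url`, `canonical_link_tag`, and `url_variants`, are hypothetical.

```python
from html import escape
from math import prod
from urllib.parse import parse_qsl, urlencode, urlsplit, urlunsplit

# Assumption: these parameters only sort or filter the same content.
NON_CANONICAL_PARAMS = {"sort-by", "sort-order", "availability"}


def canonical_url(url):
    """Drop non-canonical parameters so all variants share one canonical URL."""
    parts = urlsplit(url)
    kept = [
        (key, value)
        for key, value in parse_qsl(parts.query, keep_blank_values=True)
        if key not in NON_CANONICAL_PARAMS
    ]
    return urlunsplit((parts.scheme, parts.netloc, parts.path, urlencode(kept), ""))


def canonical_link_tag(url):
    """Build the rel=canonical tag to place in the <head> of every variant."""
    return f'<link rel="canonical" href="{escape(canonical_url(url), quote=True)}">'


def url_variants(values_per_parameter):
    """Number of distinct URLs if every parameter can take any of its values."""
    return prod(values_per_parameter)


example = (
    "http://www.example.com/?price-category=couch-furnature"
    "&sort-by=manufactuer&sort-order=asc&availability=null"
)
print(canonical_link_tag(example))
# Hypothetical counts: 10 categories, 5 sort fields, 2 sort orders, 3 availability states.
print(url_variants([10, 5, 2, 3]))
```

With those hypothetical counts, four parameters already produce 300 URL variants for what is essentially the same set of pages.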

3. Quality of Supplemental Content

Main Content is the part of a webpage that focuses on the main goal of the page. It can be a blog, product, news article, video or other content.

When we think of “content,” we typically think of Main Content.

Supplemental Content is the extra part of a webpage that is outside or only related to the main goal of the page.

Google’s Search Quality Evaluators Guidelines spend a lot of time discussing Supplemental Content. The quality of Supplemental Content can have an impact on user experience and how Google evaluates the page.

Low-quality Supplemental Content (such as annoying ads and poor navigation) frustrates the user, is often unrelated to the Main Content, and can lower engagement.

Good Supplemental Content adds value for the user, encourages exploration, and improves engagement. This can include:

- Navigation

- Related articles

- Additional products

- Comments

- Advertisements

- …and more

4. Real World Business Information

Websites associated with verifiable, real-world businesses often have a leg up in Google Search Results. There are a number of reasons for this:

- Business citations help establish trust, and often links, for real-world businesses

- Users interacting with maps and voice search are more likely to engage with real businesses

- Having verifiable Wikidata for your business entity can be helpful

If your website is associated with a real-world business, it’s often helpful to build business citations to support it. This is even more critical if you do Local SEO.

5. Robots Control

While making your site crawlable and accessible to search engines is a critical ranking factor, often telling Google what not to index and where not to crawl is just as important.

Some areas where you may want to restrict search robots from crawling or indexing your site:

- Duplicate content

- Low-quality content

- Low-value search results pages

- Administration pages

- Members only areas

- Pages with privacy concerns

While robots.txt is often the first choice to bend bots to your will, it’s also a very blunt tool that lacks the refinement of robots meta directives, canonical tags, HTTP headers, or other methods of robots control.
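As a rough illustration of how blunt robots.txt rules are, the sketch below uses Python's standard `urllib.robotparser` to test URLs against a small rule set. The rules and paths are hypothetical, and the helper `is_crawlable` is an illustrative name, not part of any SEO tool.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt covering a few of the areas listed above.
ROBOTS_TXT = """\
User-agent: *
Disallow: /admin/
Disallow: /members/
Disallow: /search
"""

parser = RobotFileParser()
parser.parse(ROBOTS_TXT.splitlines())


def is_crawlable(url, user_agent="*"):
    """True if the rules above allow this user agent to crawl the URL."""
    return parser.can_fetch(user_agent, url)


for url in (
    "https://example.com/seo-success-factors/",
    "https://example.com/admin/login",
    "https://example.com/search?q=couch",
):
    print(url, is_crawlable(url))
```

Note that these rules match on path prefixes only and control crawling, not indexing. Keeping an individual page out of the index is usually handled with a robots meta directive such as `<meta name="robots" content="noindex">` or the equivalent `X-Robots-Tag` HTTP header, on a page that crawlers are still allowed to fetch.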

6. Link Velocity

A common scenario: You build and launch your excellent content, earn a bunch of links and sail into rankings and traffic glory. Then, over time, your rankings start to slide. Eventually, even though you have far more links than your competitors, your content is relegated to the 3rd page of Google.

Link Velocity is often thought of as a Freshness Factor. The concept is that the rate at which sites link to you can indicate how fresh and relevant your content is.

If your link velocity slows down or stops altogether, your content may no longer be fresh or worthy of ranking. If your link velocity speeds up over time, it may indicate your content deserves to be pushed up in search results.
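One simple way to watch link velocity is to count newly discovered links per month. This is a minimal sketch that assumes you can export the date each link was first seen from a backlink tool. The dates below are made up, and `links_per_month` is a hypothetical helper.

```python
from collections import Counter
from datetime import date


def links_per_month(first_seen_dates):
    """Count newly discovered links per (year, month), in chronological order."""
    counts = Counter((d.year, d.month) for d in first_seen_dates)
    return dict(sorted(counts.items()))


# Hypothetical export of "first seen" dates for links to one page.
first_seen = [
    date(2024, 1, 5),
    date(2024, 1, 19),
    date(2024, 2, 2),
]
print(links_per_month(first_seen))
```

A month-by-month series that trends toward zero matches the slowdown scenario described above.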

7. Sitemaps

While sitemaps probably aren’t an actual ranking factor, using sitemaps effectively can play a role in SEO success, especially for larger sites.

Sitemaps can help Google find and prioritize content on your site, in ways that may help them to do it faster or more efficiently than they otherwise would.

Aside from cataloging the important pages on your site, sitemaps can also highlight specialized content, including:

- Images

- Videos

- Alternate language pages (hreflang)

- Google News

While most people think of XML sitemaps, HTML sitemaps can add additional value. For a cool example, check out the New York Times HTML sitemap.
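For reference, a basic XML sitemap is a short file that can be generated with a few lines of code. The sketch below uses the namespace from the sitemaps.org protocol. The function name `build_sitemap`, the URLs, and the date are placeholders for illustration.

```python
import xml.etree.ElementTree as ET

SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"


def build_sitemap(entries):
    """Build sitemap XML from (url, lastmod) pairs; lastmod is YYYY-MM-DD or None."""
    urlset = ET.Element("urlset", xmlns=SITEMAP_NS)
    for loc, lastmod in entries:
        url = ET.SubElement(urlset, "url")
        ET.SubElement(url, "loc").text = loc
        if lastmod:
            ET.SubElement(url, "lastmod").text = lastmod
    body = ET.tostring(urlset, encoding="unicode")
    return '<?xml version="1.0" encoding="UTF-8"?>\n' + body


print(build_sitemap([
    ("https://example.com/seo-success-factors/", "2024-01-15"),
    ("https://example.com/about/", None),
]))
```

Specialized sitemaps for images, videos, news, and hreflang add extra namespaces and child elements on top of this basic structure.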

8. Linking Out to High-Quality Sources

At times, Google has indicated that external links are a ranking factor:

“In the same way that Google trusts sites less when they link to spammy sites or bad neighborhoods, parts of our system encourage links to good sites.” – Matt Cutts

While at other times, Google implies something more opaque:

“Our point of view, external links to other sites, so links from your site to other people’s sites isn’t specifically a ranking factor. But it can bring value to your content and that in turn can be relevant for us in search. And whether or not they are not followed, doesn’t really matter.” – John Mueller

Sometimes, SEOs are scared of linking out, fearing they’ll transfer authority elsewhere. The truth is, Google wants to reward sites that offer good experiences.

What’s more, multiple SEO experiments and correlation studies show that linking out to high-quality resources is correlated with higher rankings.

9. Site Architecture

Site Architecture in SEO refers to the organization of your website, navigation, and how the pages are linked together. An example would be “Homepage > Categories > Products” while defining how all of these different elements link to one another.

A clear Site Architecture not only helps Google with crawling, but also helps distribute topical link authority while helping users with navigation. All of these elements are important. Common practices include:

- Cross-linking to related categories/products/pages

- Flat architecture: No more than three clicks to the deepest level

- Breadcrumbs
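The "three clicks" guideline can be checked with a breadth-first search over your internal links. This is a minimal sketch that assumes you already have a map of which pages link to which (for example, from a crawl). The link map below and the name `click_depths` are hypothetical.

```python
from collections import deque


def click_depths(links, home="/"):
    """Breadth-first search: minimum number of clicks from the homepage to each page."""
    depths = {home: 0}
    queue = deque([home])
    while queue:
        page = queue.popleft()
        for target in links.get(page, []):
            if target not in depths:
                depths[target] = depths[page] + 1
                queue.append(target)
    return depths


# Hypothetical internal link map: page -> pages it links to.
links = {
    "/": ["/sofas/", "/chairs/"],
    "/sofas/": ["/sofas/blue-couch/"],
    "/sofas/blue-couch/": ["/chairs/"],
}
depths = click_depths(links)
too_deep = [page for page, depth in depths.items() if depth > 3]
print(depths, too_deep)
```

Pages missing from the result are not reachable from the homepage at all, which is usually worth investigating as well.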

10. Pagination

Pagination is another aspect that assists Google with crawling and indexing your site, though it is not itself a direct ranking factor.

When you have multiple pages that run in sequence—such as category pages that list products or blog posts—proper pagination setup can help search engines to find all the relevant content, and signal that these pages are related to one another.

Pagination is especially important when the sequential page system isn’t clear, like a page with infinite scroll.

11. Domain Age

On one hand, Google has clearly stated that Domain Age isn’t specifically a ranking factor.

On the other hand, Google has also stated that they may look at the date when they first crawled a website, or the age of links pointing to a site.

SEOs have often noted the difficulty in getting a newer site to rank for competitive terms, and called this period the “Sandbox.”

So while there’s nothing specifically stopping a new site from ranking, it’s typically easier for older sites to rank because they’ve had longer to build up link and authority signals. In fact, almost all SEO correlation studies find a relationship between older domains and higher rankings.

12. Website & Business Reputation

In Local SEO, we know that citations and reviews make up two huge pieces of the ranking puzzle. It’s important for a business to get high-quality citations, and it’s important to earn authentic positive reviews to rank higher.

The concept of reputation extends to regular search results as well. Google Quality Rater Guidelines consistently score sites on perceived reputation. We know Google has incorporated customer experience analysis into its algorithm.

For any online business, real-world customer signals can have a positive or negative effect on rankings. This is especially relevant now that Google can measure, through phones, what stores people visit and how long they stay.

Earning quality citations, positive reviews, and delivering excellent real world and online experiences can make a difference to your online visibility.

13. Use of Semantic Content

Previously, we discussed the use of relevant keywords as an important ranking factor. But what about the rest of the content, i.e., the words, phrases, and topics Google expects to find with a specific piece of text?

SEOs debate, test, and argue about specific methods for determining what Google may use to score topic “aboutness,” or relevance. These include TF-IDF, LDA, Proof Keywords, Co-Occurrence, Entity Salience and other concepts.

These concepts, while different, all predict that specific keywords and topics are more commonly found together than other keywords/topics.

For example, if your search topic were “apple watch,” you’d expect to find certain keywords and phrases more often than others, such as:

- Models

- Series 3

- GPS

- Heart Rate

- … and more

Multiple SEO studies have found that higher ranking content tends to have more associated keywords and phrases, and adding related phrases can improve a page’s ranking.
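Of the methods named above, TF-IDF is the simplest to demonstrate. The sketch below uses one common textbook variant (term frequency times the log of inverse document frequency) on three made-up snippets. It illustrates the idea only and makes no claim about how Google scores relevance.

```python
import math
from collections import Counter


def tf_idf(documents):
    """Return one {term: score} dict per document, using tf * log(N / df)."""
    tokenized = [doc.lower().split() for doc in documents]
    # Document frequency: in how many documents each term appears.
    doc_freq = Counter(term for tokens in tokenized for term in set(tokens))
    n_docs = len(tokenized)
    scores = []
    for tokens in tokenized:
        counts = Counter(tokens)
        scores.append({
            term: (count / len(tokens)) * math.log(n_docs / doc_freq[term])
            for term, count in counts.items()
        })
    return scores


# Hypothetical snippets for illustration.
docs = [
    "apple watch series 3 gps",
    "apple watch heart rate",
    "apple pie recipe",
]
for doc, doc_scores in zip(docs, tf_idf(docs)):
    print(doc, doc_scores)
```

In this toy corpus "apple" appears in every document and scores zero, while terms such as "gps" that appear in only one document score highest. This is the sense in which certain terms help distinguish one topic from another.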

14. Domain Extension

When people first get into SEO, they often ask if they should use a .com or .org (or .marketing!) for their domain name. The general answer is that you should choose a domain extension best suited to your business and branding, but it’s a little more nuanced than that.

Google tends to treat all generic Top Level Domains (gTLDs) equally. Examples of gTLDs include:

- .com – Accounts for 75% of all gTLDs

- .net, .org, .info, .biz – Popular alternatives to .com

- .design, .tech, .online, .app – Newer Brand gTLDs

- .edu, .gov, .mil – Restricted, Sponsored generic Top-Level Domains (sTLD)

- .london, .tokyo, .vegas – Region-specific extensions that are treated as gTLDs

In the end, most evidence indicates that the domain extension you use doesn’t really matter, with one huge exception: use of Country Code Top-Level Domains (ccTLDs).

ccTLDs—such as .hk, .au, .fr, and .in—are used by Google to geo-target your website to a specific region. This means that by using a ccTLD, you may influence your site’s ability to show up in search on a region-by-region basis.

If your website serves users in multiple countries, it can be better to use a gTLD and specify language/region variations using hreflang.
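As an illustration of the hreflang approach, the sketch below generates the `<link rel="alternate">` tags that each language/region version of a page would carry in its `<head>`. The function name `hreflang_tags`, the URLs, and the language-region codes are assumptions for the example.

```python
from html import escape


def hreflang_tags(alternates, default_url=None):
    """Build hreflang link tags from a {language-region code: URL} mapping."""
    tags = [
        f'<link rel="alternate" hreflang="{escape(code)}" href="{escape(url)}">'
        for code, url in alternates.items()
    ]
    if default_url:
        # x-default marks the fallback page for users who match no listed variant.
        tags.append(
            f'<link rel="alternate" hreflang="x-default" href="{escape(default_url)}">'
        )
    return tags


# Hypothetical site structure on a single gTLD.
tags = hreflang_tags(
    {
        "en-au": "https://example.com/au/",
        "fr-fr": "https://example.com/fr/",
    },
    default_url="https://example.com/",
)
print("\n".join(tags))
```

Each variant should list the full set of alternates, including itself, so the annotations are reciprocal.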

15. Server Location

Along with international targeting signals such as hreflang, and ccTLD, the location of your server may influence your search visibility within a specific region.

Using a server close to your visitors has two distinct advantages:

- While information over the internet can travel close to the speed of light, there are often multiple relays, switches, and cables the signal must pass through, especially over long distances. Having a server nearby can dramatically improve page load speed, which can benefit your SEO all around.

- Google may use your server location as a signal for geo-targeting.

Today, use of Content Delivery Networks (CDNs) and cloud infrastructure can dampen these effects by delivering content to locations all over the world. That said, if you serve users in a specific area, it can be helpful to have your server physically located nearby.

16. Multiple Sources of Traffic

Almost every SEO correlation study that examines the issue shows a correlation between direct traffic and higher rankings. These studies inevitably lead to lots of controversy and debate.

Here’s the truth:

- Does Google use other sources of web traffic as a ranking signal?

- Nobody knows, and it doesn’t matter.

We know that Google watches and records what websites people visit and bookmark through the Chrome browser. It’s not a stretch to believe that they could use this information in their ranking algorithms.

In fact, there are many experiments and anecdotal reports of sites seeing a boost in Google rankings after increasing site traffic through other means, such as Facebook ads, TV commercials, and viral Reddit posts.

Regardless of whether it’s an actual ranking factor, an increase in both direct and referral traffic helps your visibility in multiple ways. More eyeballs on your content can lead to more links and shares, which directly helps your SEO.

Furthermore, diversifying your traffic beyond Google makes you more resilient to the ups and downs of Google’s algorithm.

17. Readability

SEOs often focus on Reading Level as a possible ranking factor, but that misses the point.

Readability of your content is far more important.

Google is good at parsing words and sentences together, and we know that low quality or gibberish text tends to get demoted in search results. Conversely, content that is too condensed or advanced may not appeal to a wide portion of the web.

Your content should match your audience. Legal and medical journals should use appropriate jargon and syntax. Speaking to your audience is your best bet for matching user intent.

Most of the popular web is both scannable and written at about a 7th to 8th-grade level. In fact, this is the type of text that tends to earn the most featured snippets. In many cases, it’s also the type of content that earns links and shares.

Finally, readability better engages your audience and simply helps your content perform better.
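Grade level is commonly estimated with the Flesch-Kincaid grade formula, which combines average sentence length with average syllables per word. The sketch below is a rough approximation: the syllable counter is a simple vowel-group heuristic (an assumption, not a dictionary lookup), so treat the output as a ballpark figure. The sample texts are made up.

```python
import re


def count_syllables(word):
    """Rough heuristic: count groups of consecutive vowels, with a minimum of one."""
    return max(1, len(re.findall(r"[aeiouy]+", word.lower())))


def flesch_kincaid_grade(text):
    """Estimate the U.S. school grade level needed to read the text."""
    sentences = [s for s in re.split(r"[.!?]+", text) if s.strip()]
    words = re.findall(r"[A-Za-z']+", text)
    if not sentences or not words:
        raise ValueError("text must contain at least one sentence and one word")
    syllables = sum(count_syllables(word) for word in words)
    return 0.39 * len(words) / len(sentences) + 11.8 * syllables / len(words) - 15.59


simple = "The cat sat. The dog ran."
dense = (
    "Comprehensive international documentation necessitates "
    "extraordinary organizational responsibility."
)
print(flesch_kincaid_grade(simple), flesch_kincaid_grade(dense))
```

Short sentences with short words score low, and long sentences full of multi-syllable words score high. A score is only meaningful relative to the audience you are writing for.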

Learn More

- How To Improve Content Readability And How That Will Affect Your SEO

- How to Use Yoast SEO: The Readability Analysis

- Grammarly: Free Writing Assistant

18. Factually Correct

There’s plenty of evidence that Google wants to rank sites that are factually correct over websites that play fast and loose with the truth.

- First, there’s a Google research paper which defines Knowledge-Based Trust, which is a method of scoring websites on the correctness of their factual information instead of external factors like links.

- Then, in 2017 Google updated their Quality Raters Guidelines to include guidelines for rating pages with “demonstrably inaccurate content” as Lowest Quality Pages. Generally, when Google includes something in its Search Quality Raters Guidelines, it suggests they are trying to solve for it algorithmically.

- Finally, Googlers have recently made several statements indicating their desire to fact check web pages.

So not only can it pay to have factually accurate content, it can potentially hurt to have factually inaccurate content. (For more negative SEO Success Factors, see the last section.)

19. Author Reputation

We’re reaching the end of the list of Influential Ranking Factors, and here you find Author Reputation. Why is it near the end? To be honest, nobody knows how big of a deal it is.

The idea that Google wants to know who authored a page, and use that information for ranking websites, has been around a long time. In fact, most of this information comes from Google itself.

- A Google patent titled “Agent Rank” describes a system for scoring authors not only on reputation (based on how often the author’s work is cited by others) but on subject expertise as well. So if you become an author-authority on “car parts,” Google may rank your content on car parts higher. Most people today refer to this as Author Rank.

- Google executives and engineers have frequently been quoted discussing scoring content based on authorship.

- Authorship photos were supposed to be a step in this direction. Sadly, the photos were discontinued, but many believe Author Rank lived on.

- Finally, Google’s Search Quality Raters guidelines state that High-Quality Content makes clear “Who (what individual, company, business, foundation, etc.) created the content on the page you are evaluating.”

In the real world, it’s difficult to observe the impact of any Author Rank, but it could be significant.

In any case, it’s best practice to make it clear who created your content.

- If it’s an individual author, you can include an author byline and author page on the site. The author can also build up expertise by writing authoritative content around the web, and linking author profiles.

- If content is authored by a company or group, make sure your About and Contact information is clear and up to date, so it’s obvious who is responsible for your content.

20. Accurate & Consistent Registration Info

To be honest, this factor should be listed in the “Could be Important” category.

Many SEOs over the years have speculated about the impact of private WHOIS domain registration. There are, in fact, a lot of reasons to speculate that Google monitors domain ownership and might care about WHOIS information.

- For example, this Google patent:

“… illegitimate domains may be identified. For instance, search engine 125 may monitor whether physically correct address information exists over a period of time, whether contact information for the domain changes relatively often, whether there is a relatively high number of changes between different name servers and hosting companies”

- Older statements from Matt Cutts:

“…having whois privacy turned on isn’t automatically bad, but once you get several of these factors all together, you’re often talking about a very different type of webmaster than the fellow who just has a single site or so.”

- Research showing a slight negative correlation between rankings and private domain registration.

- Recent anecdotal evidence showing the negative effects of accidental domain privacy.

- Experiments with expired domains showing how Google may not trust a change of domain ownership.

To be fair, Google has stated that “having whois privacy turned on isn’t automatically bad” – it’s more about the combined signals that indicate manipulation.

Furthermore, the effects of recent GDPR regulation throw a wrench in all this, as more and more website registration data becomes private by default.

At the end of the day, keep the following best practices in mind:

- Keep registration information up-to-date and consistent. If public, make sure the information is accurate, and if possible, tied to real-world information.

- Frequent changes in WHOIS ownership information, including lapses during expiration periods, could be a signal for Google to trust the domain less.

- There are many legitimate reasons for using private WHOIS, but using a network of private domains to link together—especially if Google can tie them together—may spell trouble for your rankings.